Lecture6

Notes for the Coursera Machine Learning course taught by Andrew Ng.

Logistic Regression

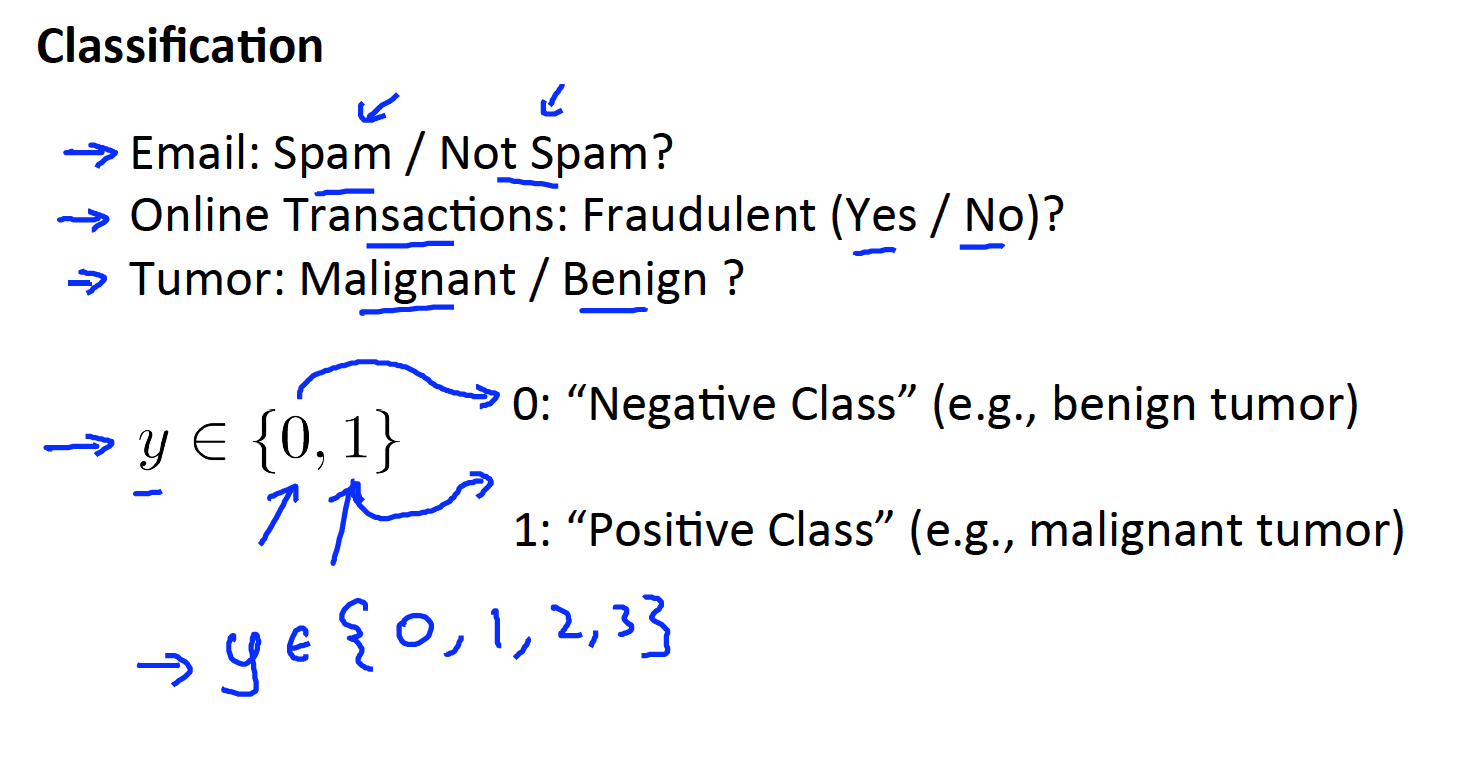

Classification

- The example below is binary classification, $y \in \{0, 1\}$, which means there are only two classes.

One way to classify is to apply a threshold to the linear regression hypothesis $h_\theta(x)$.

For example:

- If $h_\theta(x) \geq 0.5$, predict “y = 1”

- If $h_\theta(x) < 0.5$, predict “y = 0”

However, the linear regression output $h_\theta(x)$ can be > 1 or < 0.

Hence, we introduce logistic regression to make sure $0 \leq h_\theta(x) \leq 1$.
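A minimal sketch of the threshold idea, assuming a one-feature linear hypothesis and a threshold of 0.5. The function names and the parameter values are hypothetical, chosen only to show that a linear output can fall below 0 or rise above 1.

```python
def linear_hypothesis(theta0, theta1, x):
    """Linear regression hypothesis h(x) = theta0 + theta1 * x."""
    return theta0 + theta1 * x


def threshold_classify(h, threshold=0.5):
    """Predict 1 when the hypothesis output reaches the threshold, else 0."""
    return 1 if h >= threshold else 0


# Hypothetical parameters and inputs, for illustration only.
theta0, theta1 = -0.5, 0.25
for x in [0.0, 2.0, 4.0, 8.0]:
    h = linear_hypothesis(theta0, theta1, x)
    # h is -0.5 at x = 0.0 and 1.5 at x = 8.0, both outside [0, 1]
    print(x, h, threshold_classify(h))
```

The predictions are still usable, but the raw outputs cannot be read as probabilities, which is the motivation for squashing them into the range 0 to 1.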

Link to coursera section

https://www.coursera.org/learn/machine-learning/supplement/fDCQp/classification

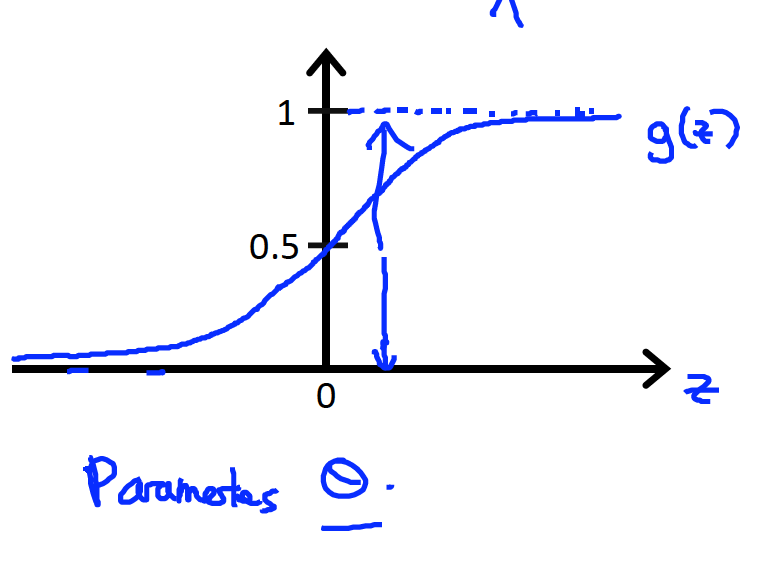

Hypothesis Representation

Logistic Regression Model

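Since we want $0 \leq h_\theta(x) \leq 1$, the hypothesis passes $\theta^T x$ through the sigmoid (logistic) function: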

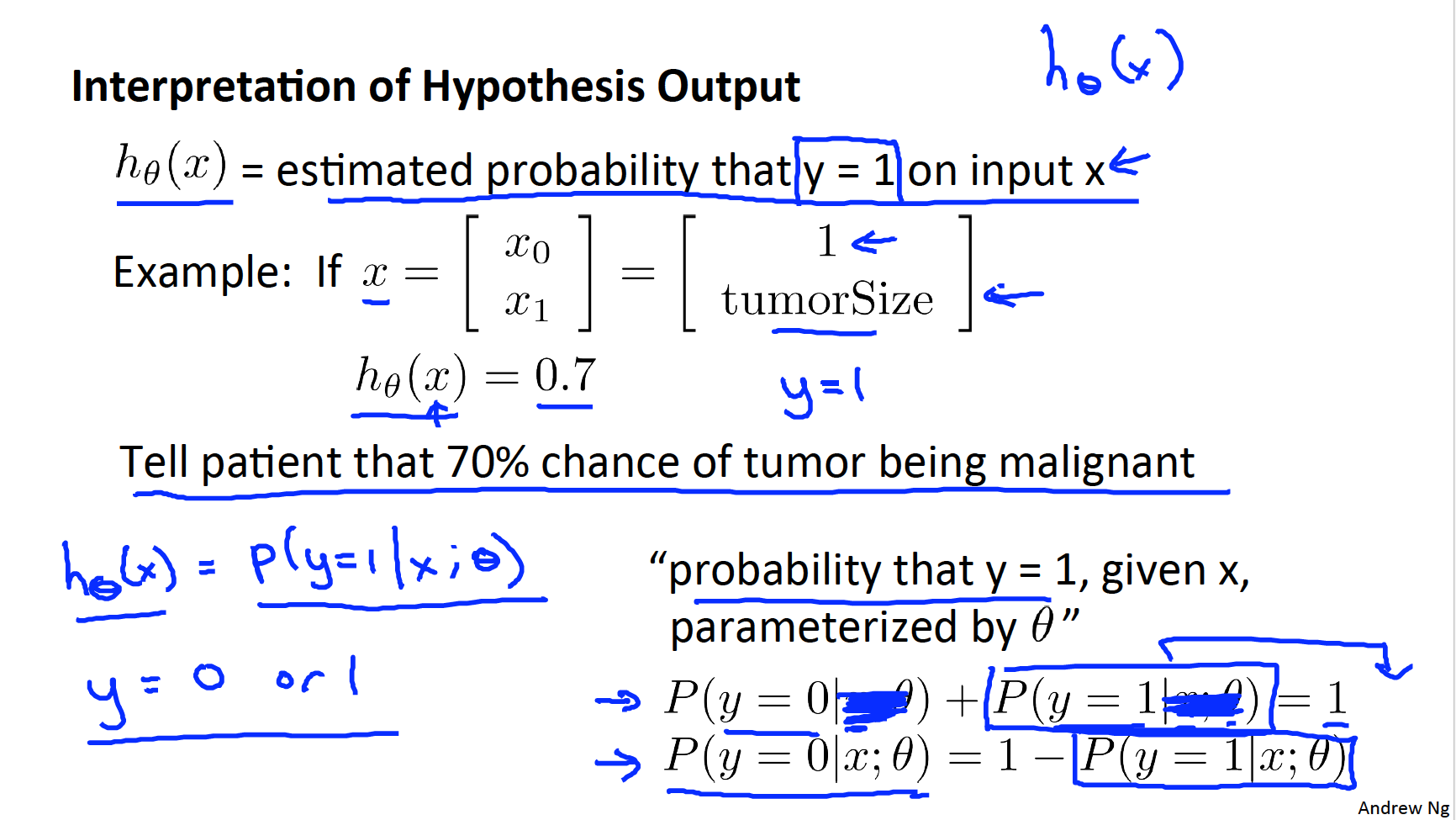
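As a rough sketch, the hypothesis can be written in plain Python as below. It assumes the standard form $h_\theta(x) = g(\theta^T x)$ with $g(z) = 1 / (1 + e^{-z})$; the function names are illustrative, and `x` is assumed to already include the intercept feature $x_0 = 1$.

```python
import math


def sigmoid(z):
    """Logistic function g(z) = 1 / (1 + e^(-z)), arranged to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def hypothesis(theta, x):
    """h_theta(x) = g(theta^T x); x is expected to include x_0 = 1."""
    z = sum(t * xi for t, xi in zip(theta, x))
    return sigmoid(z)


print(sigmoid(0.0))                         # 0.5
print(hypothesis([-0.5, 0.25], [1.0, 8.0]))  # strictly between 0 and 1
```

Whatever the value of $\theta^T x$, the output stays within 0 and 1, so it can be interpreted as a probability.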

Interpretation of Hypothesis Output

Link to coursera section

https://www.coursera.org/learn/machine-learning/supplement/AqSH6/hypothesis-representation

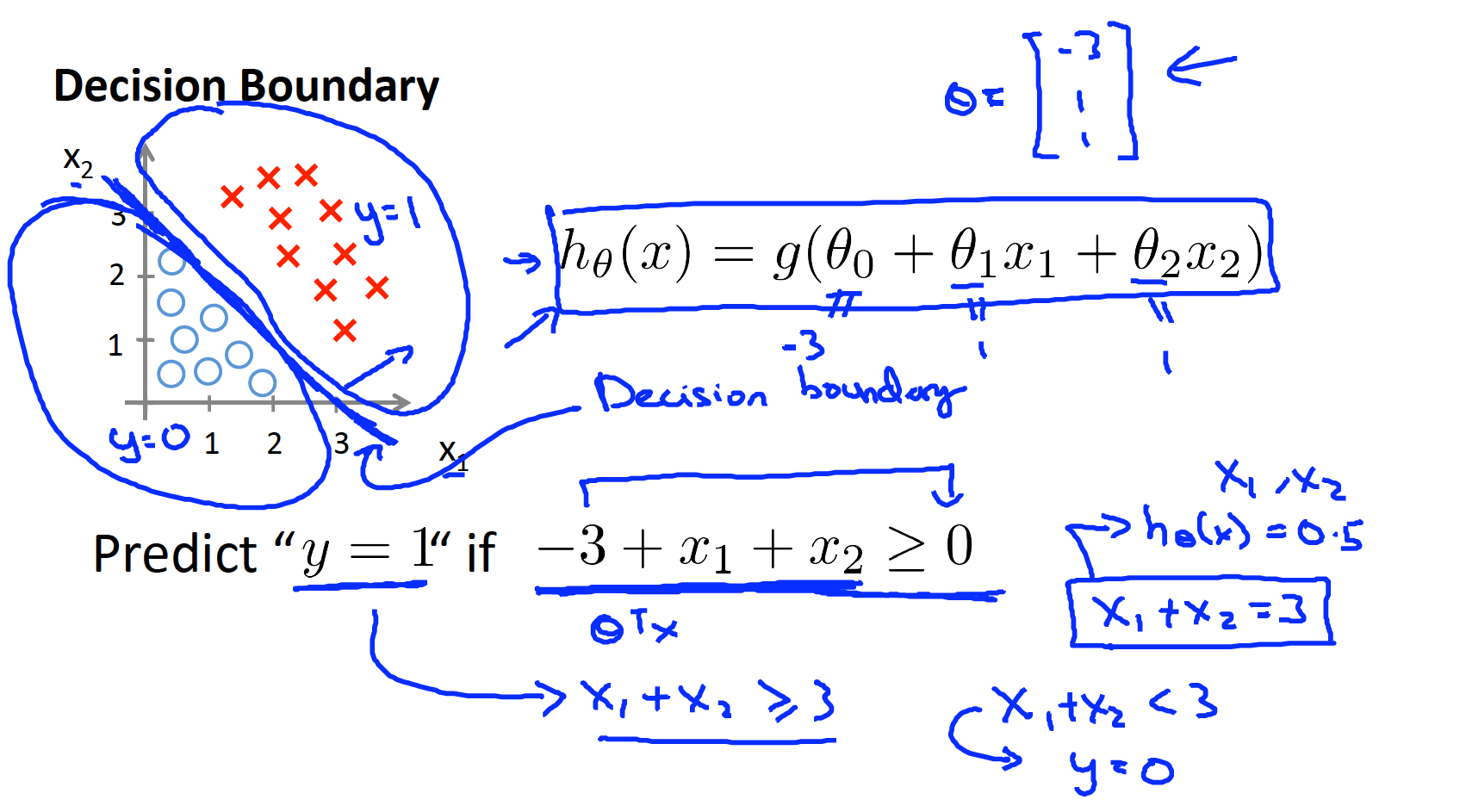

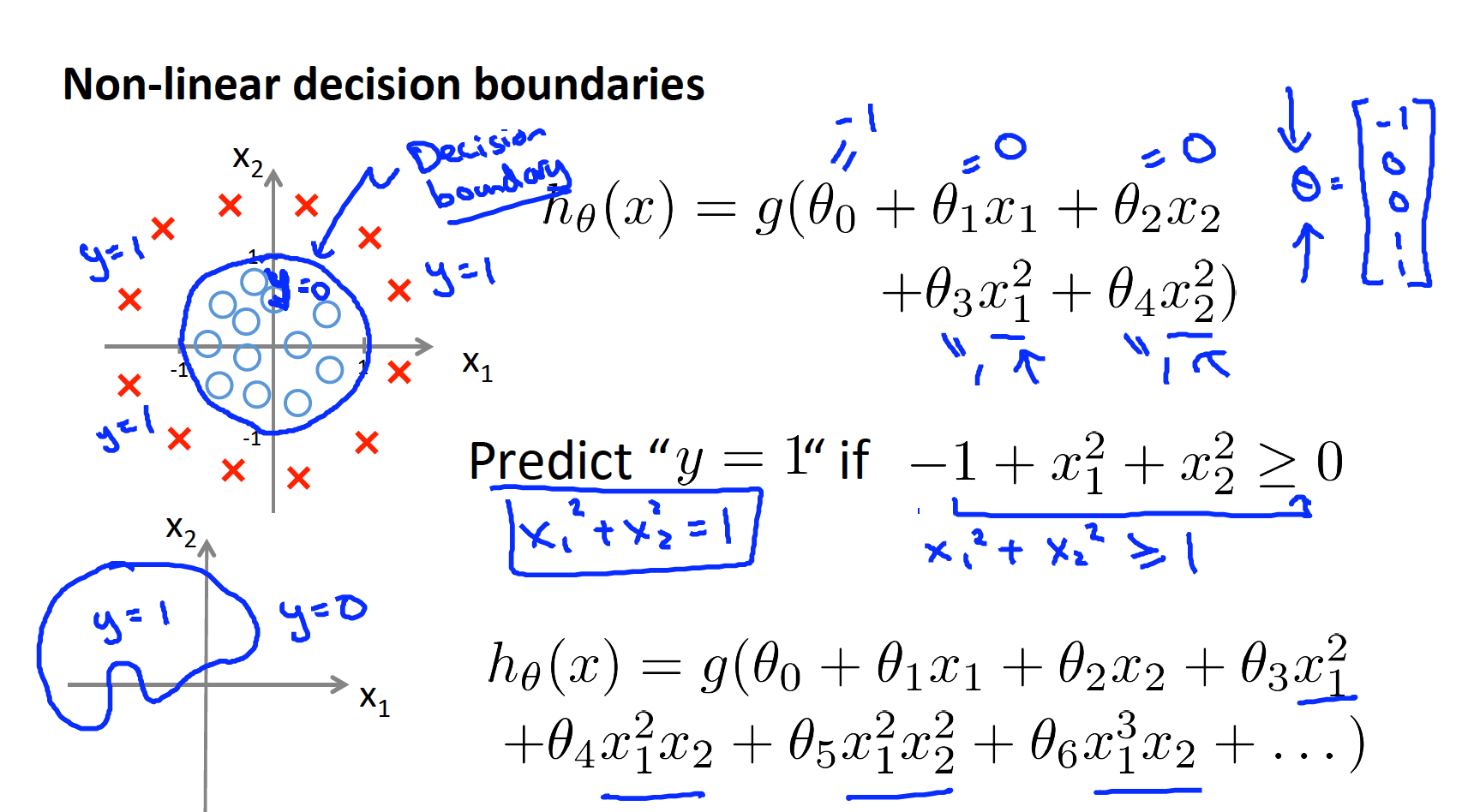

Decision boundary

Link to coursera section

https://www.coursera.org/learn/machine-learning/supplement/N8qsm/decision-boundary

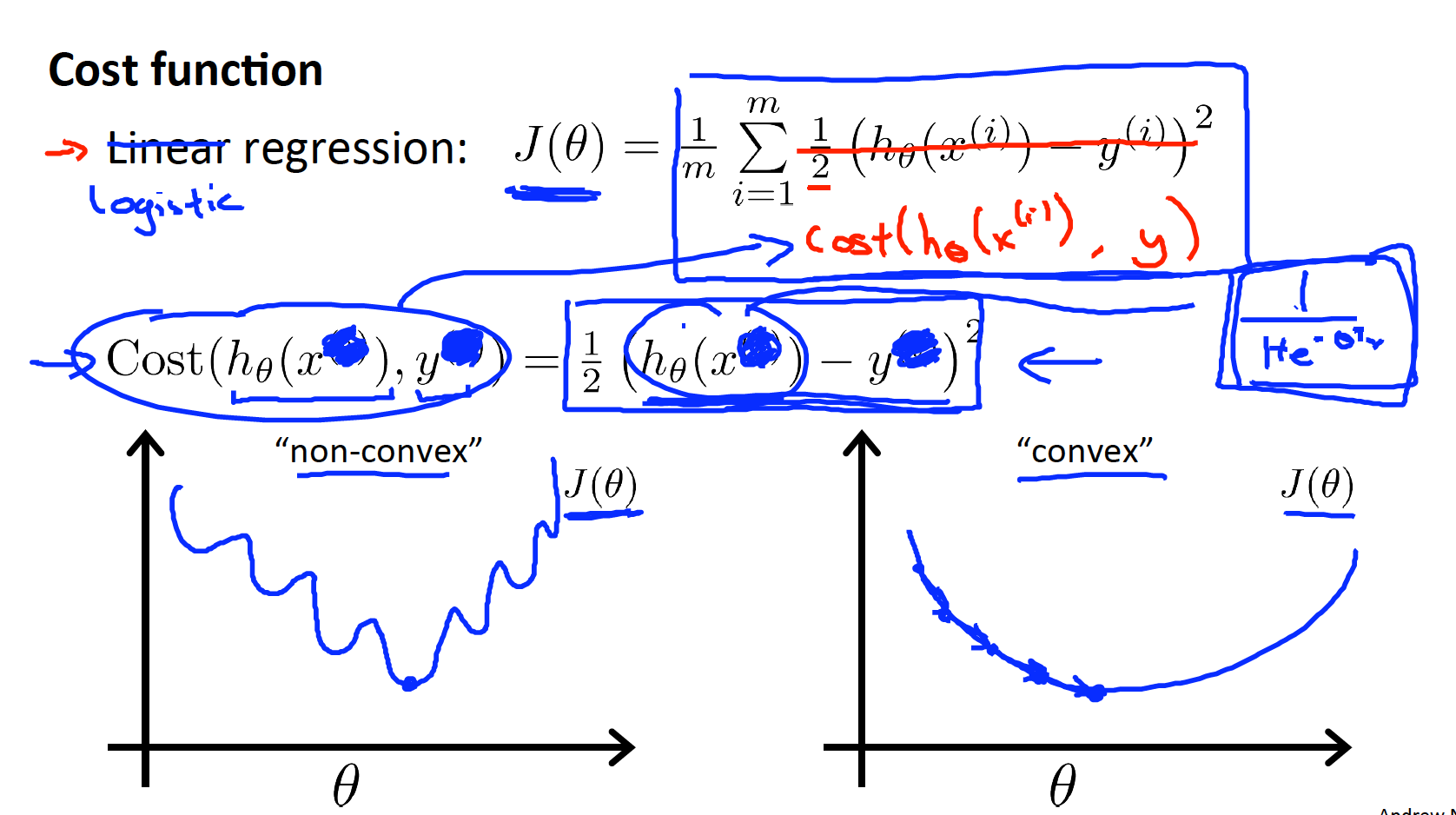

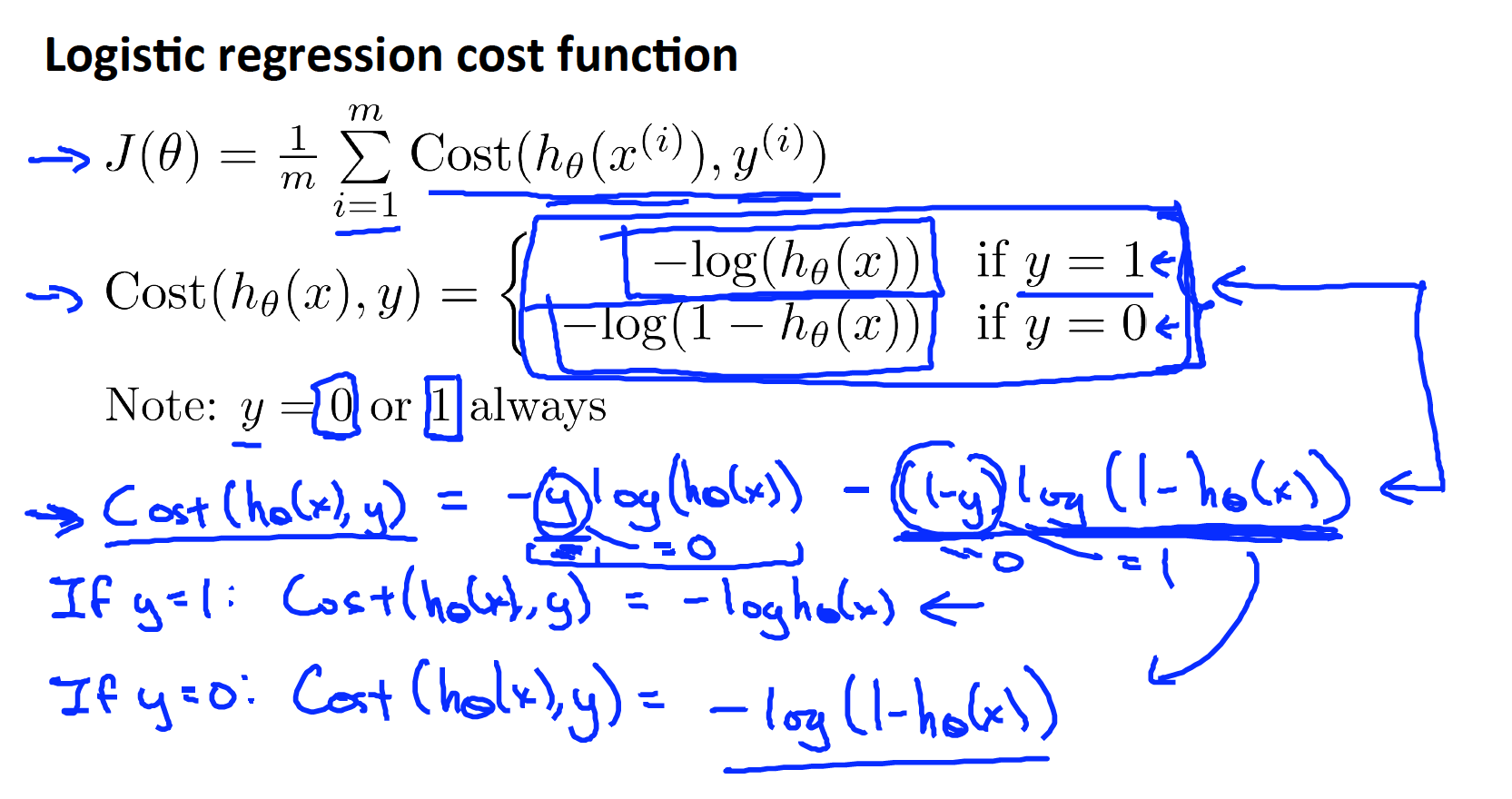

Cost function

- If we keep the squared error as our cost function, it becomes “non-convex” once the hypothesis is a sigmoid, which is not ideal for cost-minimization algorithms (e.g. gradient descent) because they can get stuck in local minima. Hence, we need a different cost function that is convex for logistic regression. (see below)

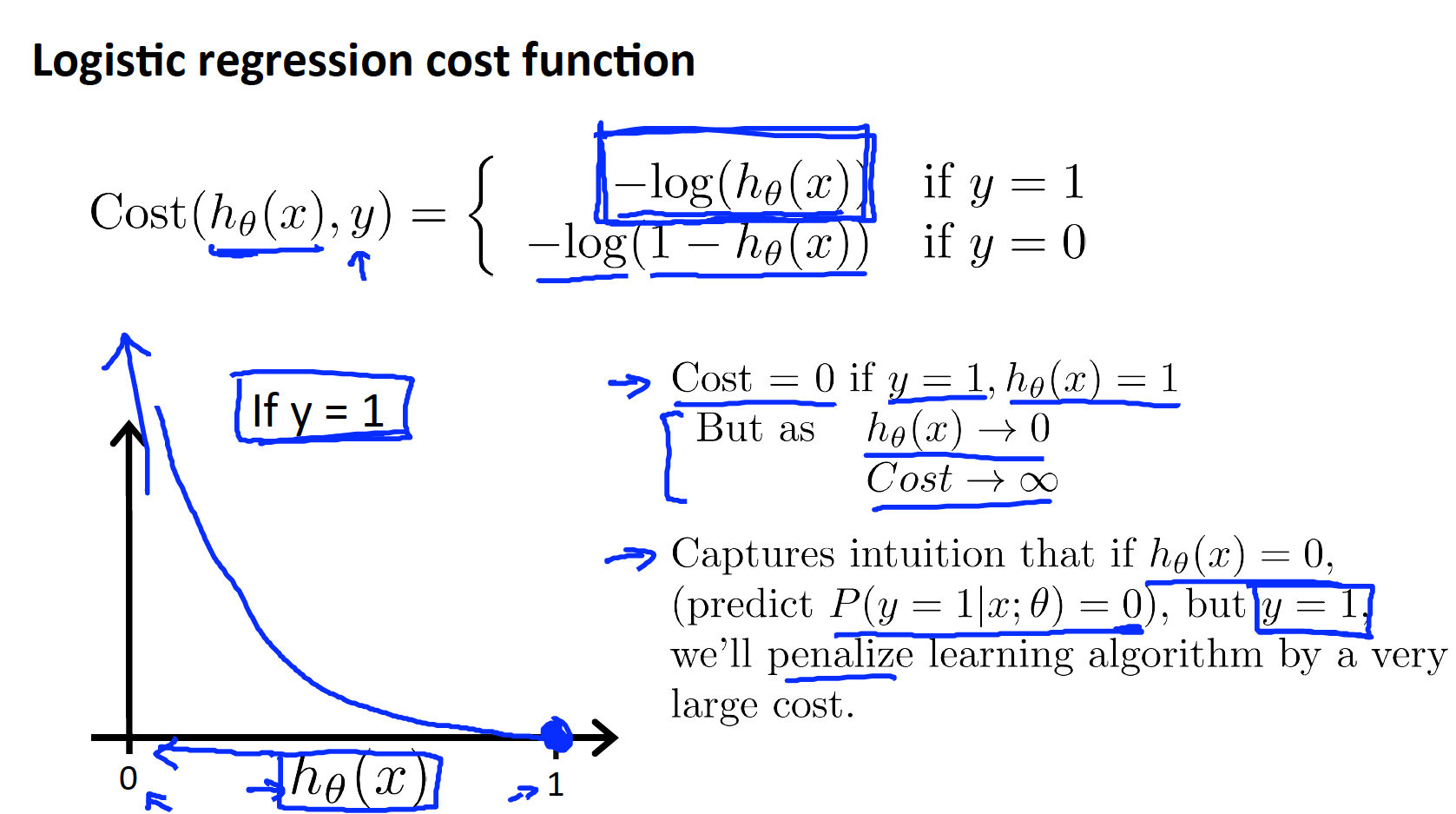

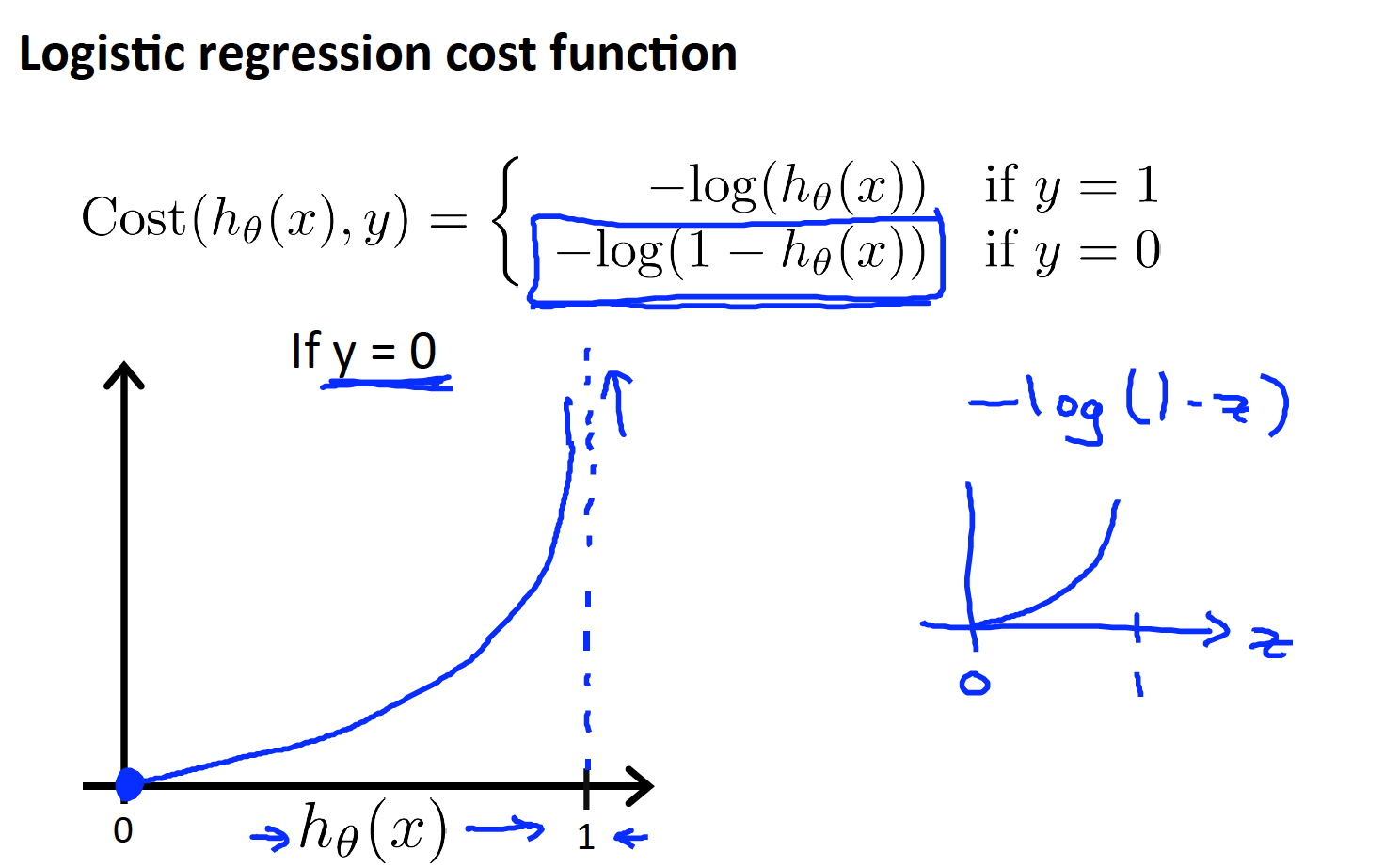

New cost function chosen for logistic regression

Link to coursera section

https://www.coursera.org/learn/machine-learning/supplement/bgEt4/cost-function

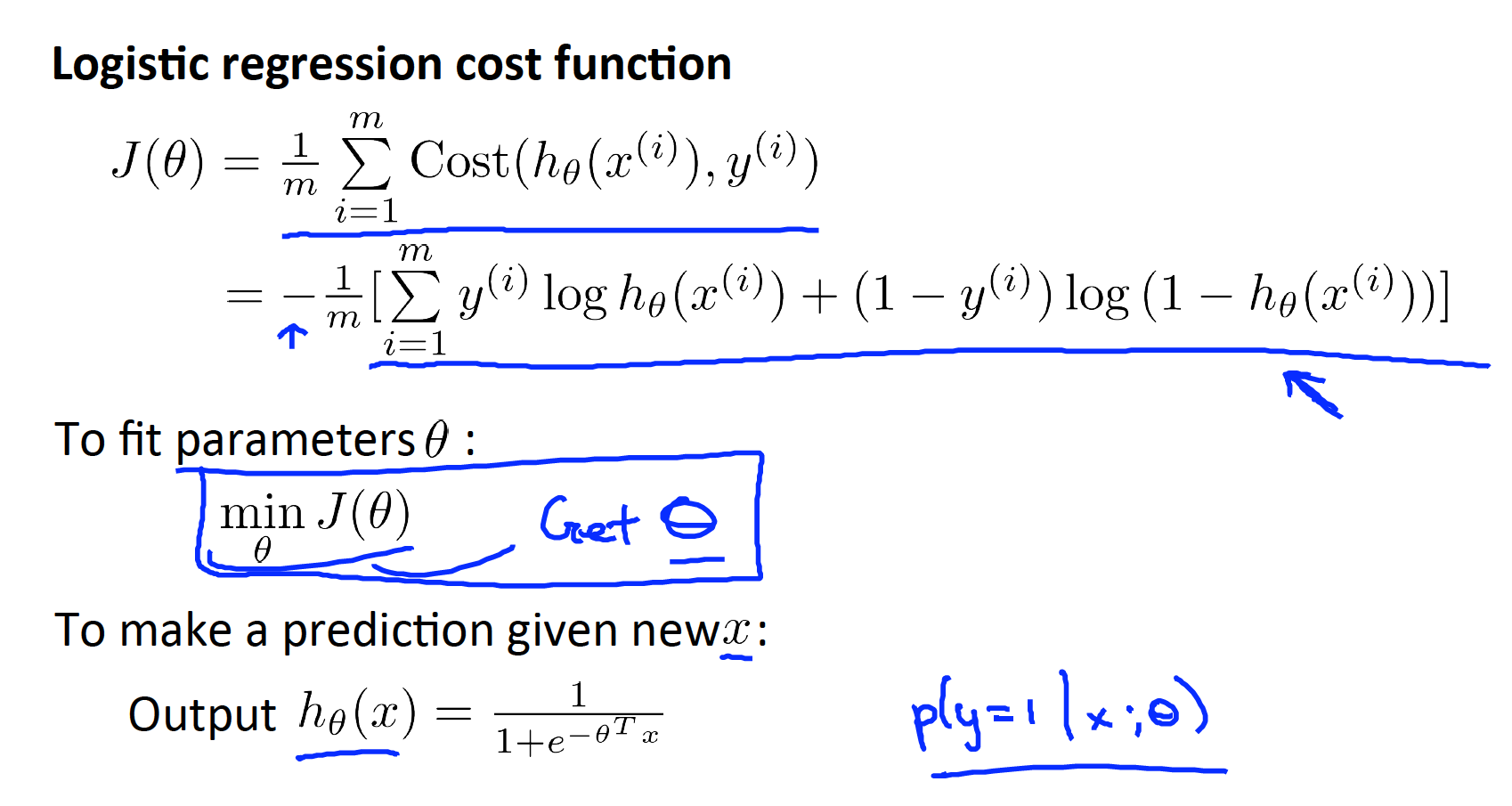

Simplified cost function and gradient descent

Simplify cost function

Hence, the final cost function for logistic regression is as follows:

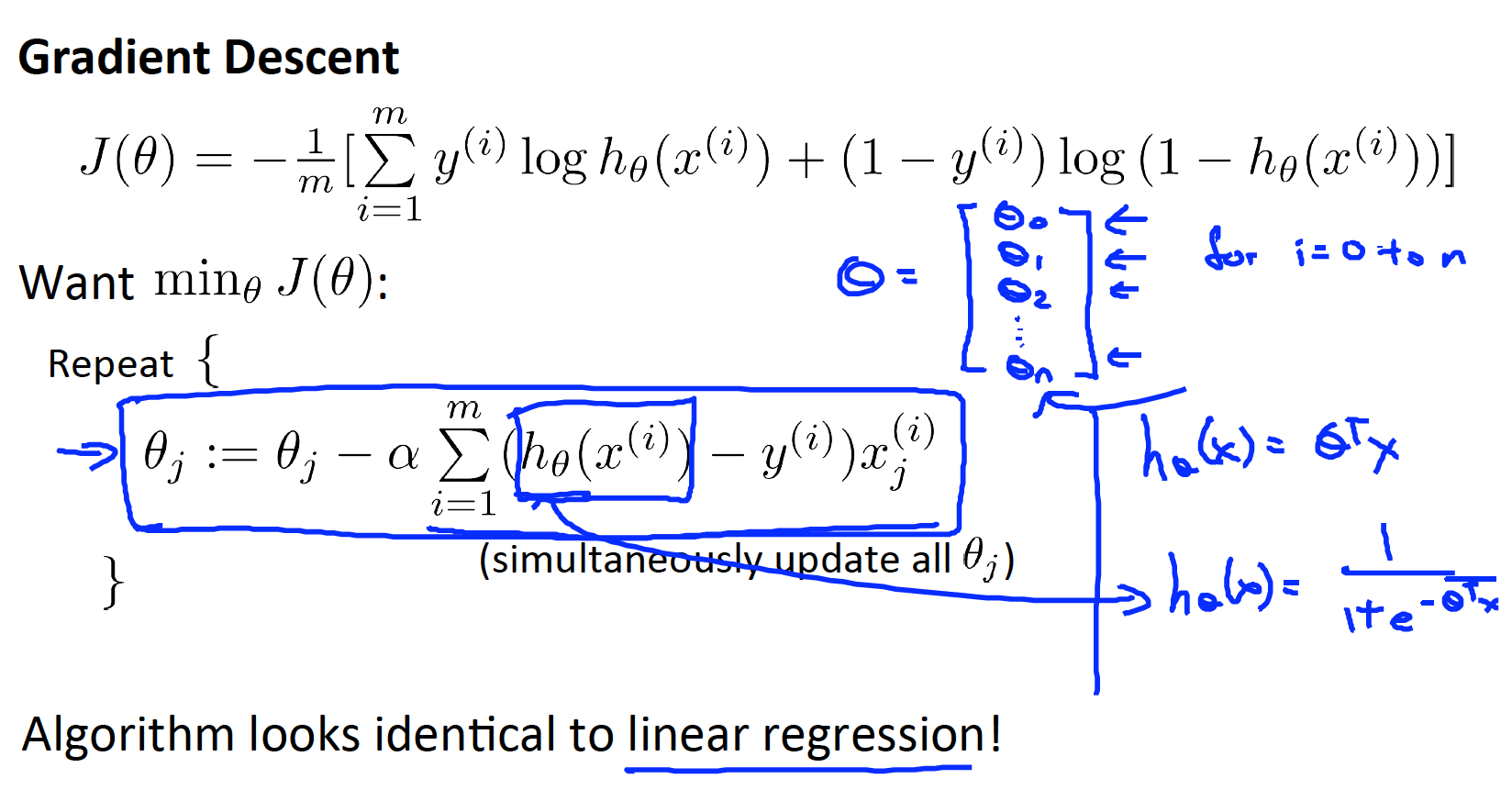
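A minimal sketch of this cost in plain Python, assuming the standard log-loss form $J(\theta) = -\frac{1}{m} \sum_{i=1}^{m} \left[ y^{(i)} \log h_\theta(x^{(i)}) + (1 - y^{(i)}) \log (1 - h_\theta(x^{(i)})) \right]$. The function names and the toy data are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def cost(theta, X, y):
    """J(theta) = -(1/m) * sum(y * log(h) + (1 - y) * log(1 - h))."""
    m = len(y)
    total = 0.0
    for x_i, y_i in zip(X, y):
        h = sigmoid(sum(t * v for t, v in zip(theta, x_i)))
        total += y_i * math.log(h) + (1 - y_i) * math.log(1 - h)
    return -total / m


# Hypothetical toy data; the first column is the intercept feature x_0 = 1.
X = [[1.0, 0.5], [1.0, 1.5], [1.0, 3.0], [1.0, 4.0]]
y = [0, 0, 1, 1]
print(cost([0.0, 0.0], X, y))  # with theta = 0 every h is 0.5, so J = log(2)
```

Note that this sketch does not guard against `h` being exactly 0 or 1, where the logarithm is undefined.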

Gradient descent

- For a proof of the gradient, see the link below.

- Link: https://medium.com/analytics-vidhya/derivative-of-log-loss-function-for-logistic-regression-9b832f025c2d
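The update rule can be sketched as follows, assuming the usual simultaneous update $\theta_j := \theta_j - \frac{\alpha}{m} \sum_{i=1}^{m} (h_\theta(x^{(i)}) - y^{(i)}) x_j^{(i)}$. The function name, learning rate, iteration count and toy data are assumptions made for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def gradient_step(theta, X, y, alpha):
    """One simultaneous update: theta_j -= alpha / m * sum((h - y) * x_j)."""
    m = len(y)
    # Prediction error h_theta(x) - y for every training example.
    errors = [sigmoid(sum(t * v for t, v in zip(theta, x_i))) - y_i
              for x_i, y_i in zip(X, y)]
    # Build the new vector from the old theta so all theta_j update together.
    return [t - alpha / m * sum(e * x_i[j] for e, x_i in zip(errors, X))
            for j, t in enumerate(theta)]


# Hypothetical toy data; the first column is the intercept feature x_0 = 1.
X = [[1.0, 0.5], [1.0, 1.5], [1.0, 3.0], [1.0, 4.0]]
y = [0, 0, 1, 1]
theta = [0.0, 0.0]
for _ in range(2000):
    theta = gradient_step(theta, X, y, alpha=0.1)
print(theta)
```

The update has the same shape as the one for linear regression; the difference is that $h_\theta(x)$ is now the sigmoid of $\theta^T x$.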

Link to coursera section

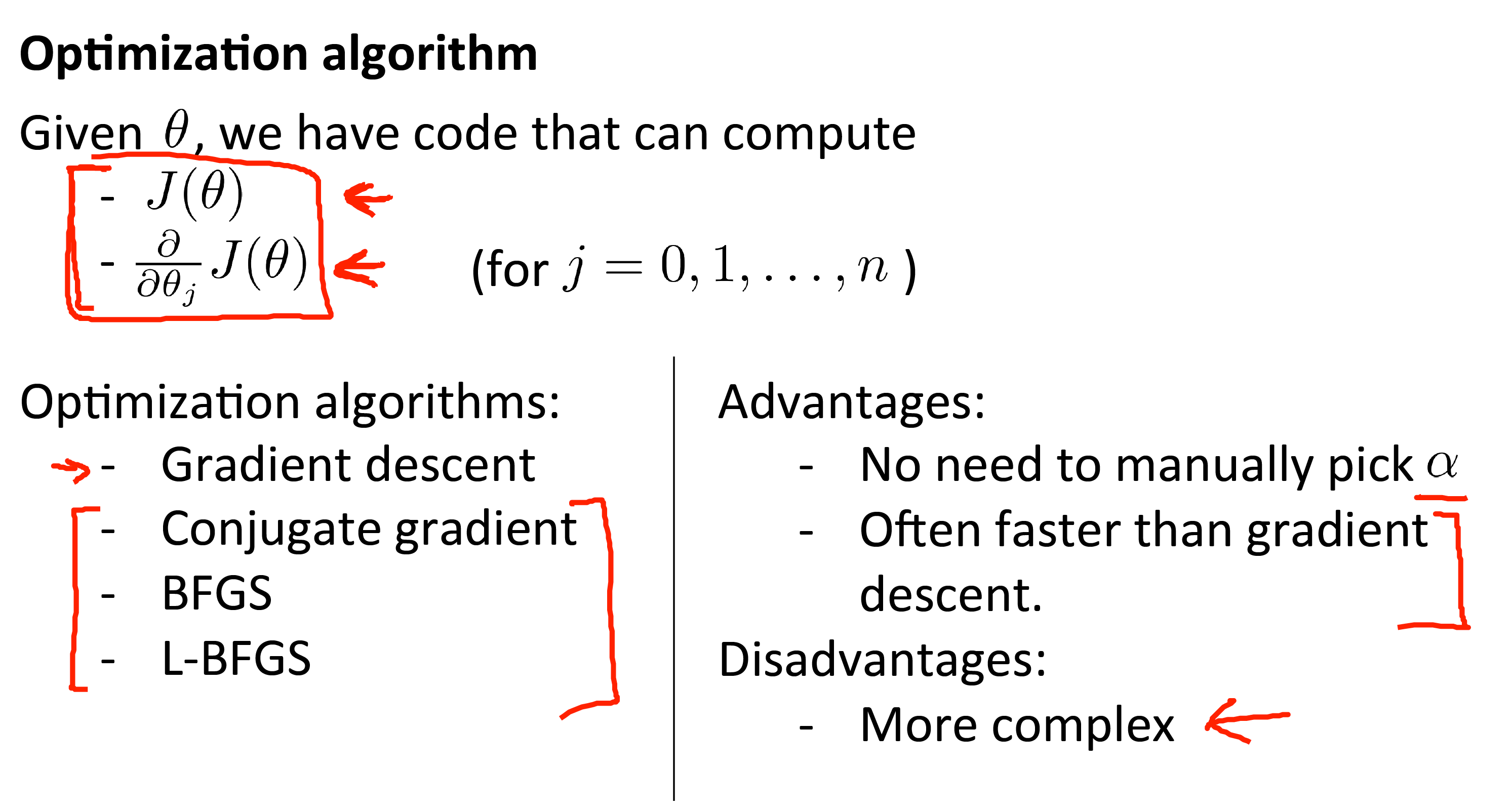

Advanced optimization

See the Coursera section for MATLAB/Octave example code.

Link to coursera section

https://www.coursera.org/learn/machine-learning/supplement/cmjIc/advanced-optimization

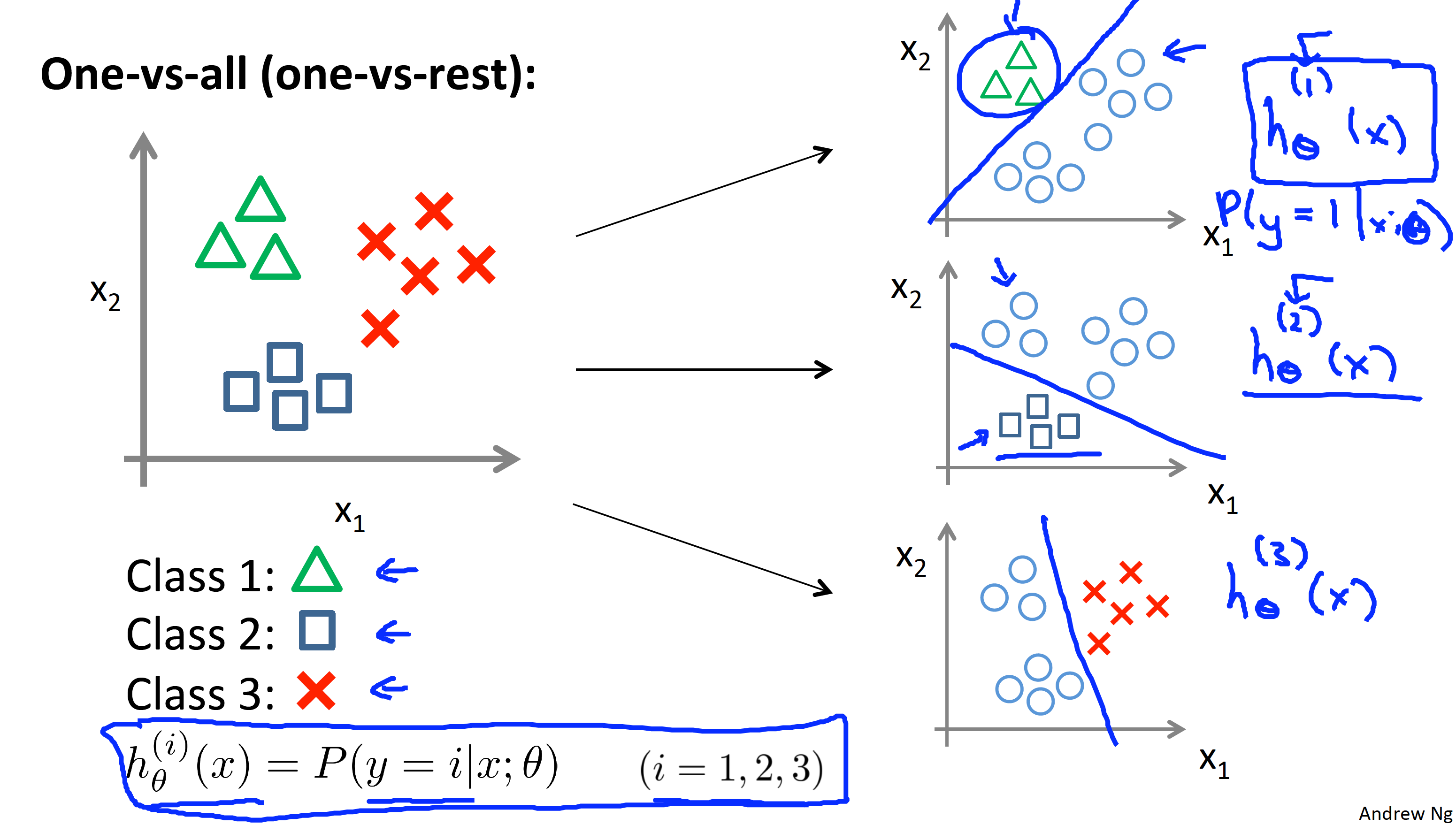

Multi-class classification: One-vs-all

Multiclass classification

- Examples

- Email foldering/tagging: Work (y = 1), Friend (y = 2), Family (y = 3), Hobby (y = 4).

- Medical diagnosis: Not ill (y = 1), Cold (y = 2), Flu (y = 3)

- Weather: Sunny (y = 1), Cloudy (y = 2), Rain (y = 3), Snow (y = 4)

One-vs-all details

- Train a logistic regression classifier $h_\theta^{(i)}(x)$ for each class $i$ to predict the probability that $y = i$.

- On a new input $x$, to make a prediction, pick the class $i$ that maximises $h_\theta^{(i)}(x)$.
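The prediction step can be sketched as below, assuming one parameter vector per class has already been trained. The function name `predict_one_vs_all` and the hand-picked parameters are hypothetical; they are not trained values.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_one_vs_all(thetas, x):
    """Return the class label whose classifier outputs the highest probability.

    thetas maps each class label i to the parameter vector of classifier i.
    """
    scores = {label: sigmoid(sum(t * v for t, v in zip(theta, x)))
              for label, theta in thetas.items()}
    return max(scores, key=scores.get)


# Hypothetical, hand-picked parameters for three classes (not trained).
thetas = {
    1: [2.0, -1.0],
    2: [0.0, 0.0],
    3: [-2.0, 1.0],
}
print(predict_one_vs_all(thetas, [1.0, 0.0]))  # classifier 1 scores highest
print(predict_one_vs_all(thetas, [1.0, 4.0]))  # classifier 3 scores highest
```

Each classifier is trained separately by relabelling its own class as 1 and all other classes as 0, so only the final comparison couples them.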